It’s being widely reported this weekend that a former engineer involved in the Volkswagen diesel scandal, James Liang, has been sentenced to a 40-month prison term. I’ve seen a bit of shock and outrage on Twitter over this, including a quickness to conclude that Liang was sent to prison just for doing something his boss told him to do. It seems to me that some people have bought into the school of thought that if you’re ordered to do something, your butt should be totally covered. As a software engineer, I want to speak against and dispel this belief, for the good of myself and my craft.

Background

If you’ve been living under a rock, or just aren’t quite sure what the emissions scandal was about, this summary from the Car and Driver blog[1] does a good job of explaining what happened:

Volkswagen installed emissions software on more than a half-million diesel cars in the U.S.—and roughly 10.5 million more worldwide—that allows them to sense the unique parameters of an emissions drive cycle set by the Environmental Protection Agency. […] [T]hese so-called “defeat devices” detect steering, throttle, and other inputs used in the test to switch between two distinct operating modes.

In the test mode, the cars are fully compliant with all federal emissions levels. But when driving normally, the computer switches to a separate mode—significantly changing the fuel pressure, injection timing, exhaust-gas recirculation, and, in models with AdBlue, the amount of urea fluid sprayed into the exhaust. While this mode likely delivers higher mileage and power, it also permits heavier nitrogen-oxide emissions (NOx)—a smog-forming pollutant linked to lung cancer—up to 40 times higher than the federal limit.

To elaborate slightly: this means there was a group of people at Volkswagen who installed software specifically designed to lie to the test equipment. The result of this lie is that there were (and probably still are) cars on the road that emit an excess of a chemical linked to lung cancer.

The one qualm that I have with Car and Driver’s depiction is their use of the word “installed.” Please excuse me if I’m giving the average reader of my blog too little credit, but I want to make it clear to everyone that installing this kind of software isn’t anywhere near the same thing as installing Microsoft Office on your PC. You’d be hard pressed to find pre-written emissions defeat software anywhere online, much less software that just happens to work with the new car engine you’re designing.

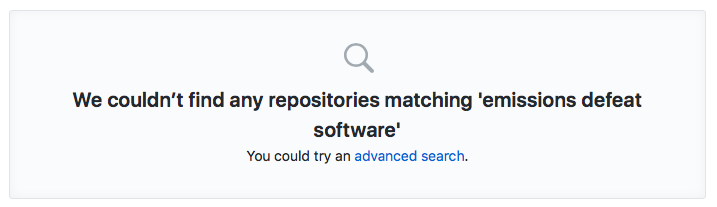

I actually searched for “emissions defeat software” while writing this blog post. The results are best summarized by this screen shot from GitHub’s project search screen:

Why does this matter?

Before Volkswagen could install this software in its cars, it had to design the software. Liang’s own admissions[2] corroborate this (emphasis mine):

According to Liang’s admissions, when he and his co-conspirators realized that they could not design a diesel engine that would meet the stricter U.S. emissions standards, they designed and implemented software to recognize whether a vehicle was undergoing standard U.S. emissions testing on a dynamometer or being driven on the road under normal driving conditions (the defeat device), in order to cheat the emissions tests.

The design element of this scandal is the one I find most troubling. It means that there was an ongoing, intentional, directed effort by the leadership and engineering teams at VW to subvert U.S. law. There were likely planning meetings, task tracking of some sort,[3] deadlines, and concrete deliverables that these teams agreed on in advance. Throughout this process, no voice saying “Hey, perhaps this isn’t okay” ever prevailed.

Just an “Engineer”

The article I’ve seen circulating the most is a Reuters piece that characterizes Mr. Liang as a “former engineer.”[4] The reaction I saw is nicely summarized by the tweet below:

Every. Single. Software developer. Must. Take. Note.

YOU can go to jail for the code YOUR BOSS tells you to write. https://t.co/3MZGHqI4dy

— Ted Neward (@tedneward) August 25, 2017

I saw a few retweets and other takes that had about the same flavor to them. This will be news to nobody, but “Oh man, that could be me” is a visceral fear that’s hard to combat. If I had to guess, I’d say this fear is motivating many of the reactions I’ve personally seen.

However, based on my reading of the Reuters article, the relevant Justice Department press release, and some well-educated guesses informed by my professional experience, I think the tweet above is largely a false equivalence. It implies that Liang was convicted simply for writing some code.

It’s unlikely that every single software engineer at Volkswagen had the level of involvement in Volkswagen’s diesel (non-)compliance that Liang appears to have had. Indeed, the Justice Department release from 2016[2] states: “Liang admitted that during some of these meetings [with regulators], which he personally attended, his co-conspirators misrepresented that VW diesel vehicles complied with U.S. emissions standards and hid the existence of the defeat device from U.S. regulators.” Here, Liang is directly complicit in misleading U.S. regulators. At the risk of over-generalizing from my own experience, I’ll state that front-line engineers are rarely in the room with regulators.

Still, I want to set aside that false equivalence and discuss something more important. Even if Liang himself is not one of the front-line software engineers Ted Neward’s tweet appeals to, do we really believe those engineers should be totally free from responsibility in this scandal?

The Ethics of Engineering

Like many of my contemporaries, I strongly prefer to describe myself with the job title “Software Engineer” rather than the practically interchangeable “Software Developer.” I don’t see my job as developing software. My job is to solve problems, and I will most often use or develop software to do so.[5]

I cannot know what happened in the back rooms at VW. Perhaps ethical concerns were raised. Perhaps management threatened jobs. Perhaps every front-line engineer involved had defensible reasons why they couldn’t resign their position on ethical grounds, and ultimately helped create the deception that led to the sentencing of Mr. Liang and others.

I would not fault someone for doing the wrong thing for the right reasons. I likewise do not think that the front-line software engineers at VW should be held legally responsible in this specific case. Even so, I doubt the ethical intelligence of anyone who would suggest, without further exonerating evidence, that those individuals bear no moral responsibility for the deception and health risk they helped create.

In our roles as problem solvers, whether or not someone is paying us for the work we’re doing, we are ultimately responsible for whether our solution to the problem at hand will make the world a better place. This conviction is what drives the conscience of the ethical engineer, software or otherwise, and it plays out in our decisions big and small.

This conviction is simultaneously burdensome and empowering.

It is burdensome when we’re at odds with whatever organization currently employs us and we’re asked to do things that go against our ethical code. It is burdensome when we’re told doing the right thing is too expensive and we should just do the cheap thing and be happy. It is burdensome when our peers or bosses reply, “But everyone else is doing X, so you should just get over your objections and implement X!” Or, worse still, when those in power threaten to fire us if we do not capitulate.

Yet even in burdensome organizations, this conscience is empowering when it motivates us to do even the smallest things to make the world better. It empowers us to speak up in the meeting and say, “I disagree with this.” It empowers us to work to ensure that the new app we’re building is accessible to someone using a screen reader. It empowers us to help customer support execute more informed, coordinated responses when things go wrong. It empowers us to go to the extra effort of making our code easier to understand for the next engineer who comes along. It empowers us to protect end-user information of our own accord instead of waiting for a security event to force our hand.

We all have the choice of whether to live in line with this conscience as we go about our problem solving. I have good days and bad when it comes to listening to my own convictions about the right thing to do. Even so, the truth is that we’re uniquely empowered to make the world a better place every day, even if only by a thousand paper cuts. On those occasions when we’re lucky enough to work with an organization that backs ethical efforts, the possibilities for improving the world we’re in are endless.

But this is not the work of those happy to punch a clock and write off ownership of what they’ve done. We have to first take up our mantle of responsibility as engineers, or else we will squander our unique, individual opportunity to make the world better by ethically doing what we do well.

Footnotes

- You can find that blog post here.

- Justice Department Press Release, 9/2016

- I find myself idly wondering if they were using JIRA, though I kind of doubt it. It would just be really amusing to me if they were because it seems like everyone uses JIRA. I also find myself idly wondering if the people who could reason their way to committing fraud like this may understand JIRA better than I do. Really, I just find myself confused at JIRA a lot these days and I wanted to vent about that a little.

- Reuters article here

- When I’m really lucky I’ll solve problems by deleting software. Those are good days.